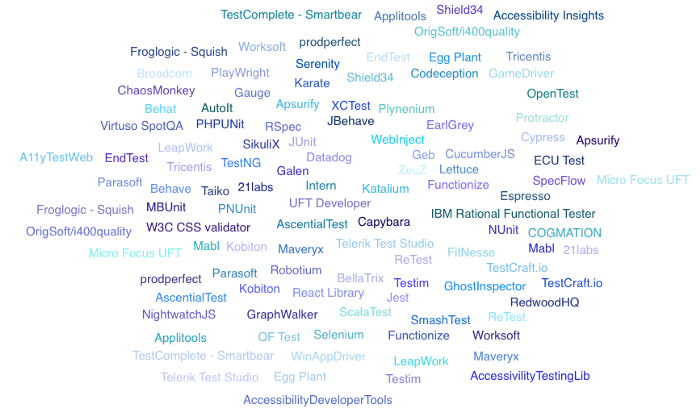

The Cloud

The goal of this blog is not to compile an inventory of every test automation platform, service, framework and tool, nor to evaluate them, but rather to take a bird’s-eye view of what the industry currently provides, to see whether a pattern emerges and to gauge the direction the industry is moving in. This includes both paid and open source offerings.

The overall idea is to see what is missing, what the next logical step could be, and whether it is possible to have everything in one place via APIs, like an AWS of Testing, i.e. 360° Automation, so customers can pick and choose what they want, switch easily, integrate with all types of providers and build their own custom solutions.

Part I of the series covers the following

Parts II and III, and possibly IV, will cover

From what I have gathered, the following pattern has emerged.

AI (Artificial Intelligence) is the key word, and code-less, no-code or low-code automation, record and replay, and automated test code generation, with or without UI building blocks, seem to be the norm.

Self Healing

Self healing, where an automated test fixes itself in response to changes in the app by using smart locators and a centralised page object store, seems to be the trend.
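To make the idea concrete, here is a minimal sketch of how a self-healing lookup might work. It is not how any particular vendor does it: `LocatorStore`, `find_with_healing` and the `find` callback are hypothetical names, and the "page" is just a dictionary standing in for a real browser.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from typing import Callable, Optional


@dataclass
class LocatorStore:
    """Hypothetical central store: logical element name -> candidate locators."""
    candidates: dict[str, list[str]] = field(default_factory=dict)

    def promote(self, name: str, locator: str) -> None:
        """Move a locator that worked to the front so it is tried first next time."""
        locators = self.candidates[name]
        locators.remove(locator)
        locators.insert(0, locator)


def find_with_healing(
    store: LocatorStore,
    name: str,
    find: Callable[[str], Optional[str]],
) -> Optional[str]:
    """Try each known locator in order; 'heal' the store when a fallback wins."""
    for locator in list(store.candidates.get(name, [])):
        element = find(locator)  # assumed to return None when nothing matches
        if element is not None:
            if store.candidates[name][0] != locator:
                store.promote(name, locator)
            return element
    return None


# The app changed: the button id "#buy" was renamed to "#buy-now".
page = {"#buy-now": "<button>"}
store = LocatorStore({"buy_button": ["#buy", "#buy-now"]})
element = find_with_healing(store, "buy_button", page.get)
print(element, store.candidates["buy_button"])
```

After the call, the fallback locator that matched sits at the front of the list, so the next run tries it first. A real platform would presumably discover new candidates with AI-based recognition rather than rely on a fixed list.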

Smart Locators or Page Objects recognition

Elements are auto-discovered using AI-based advanced object recognition, or replaced by custom ids, then stored in a central store and used in record and replay.

Auto Generation of Test Code

Automation code is generated by the system, either by running an agent as part of your application or by other means using AI/ML, to reflect actual user journeys or possible combinations. This code can then be run repeatedly on the provider’s platform.

Runners

Most platforms’ revenue model is based on the number of test cases and the number of agents used to run the tests. They either have their own cross browser & device solution or allow you to connect to external cross browser & device providers.

Desktop — Mobile — Native Apps — Api — Desktop Apps-Embedded Systems — 2D/3D Game-ERP

Most services provide an automation solution for one or a few of the above. The new trend here is automation solutions for 2D/3D gaming and bot testing.

These are provided either as Platform as a Service (PaaS) or as Software as a Service (SaaS).

These services are promoted as programming-free, code-less, low-code or no-code, but some of the platforms allow you to add your own automation code or reuse existing code to augment the code-less or auto-generated code.

This is attractive to small and medium-sized companies, or to individual teams that need such a solution for their use case or lack people with programming skills. When it comes to enterprise-level companies, we need to wait and see how code-less solutions are going to work!

A self-learning process is a component of AI, and data is the key to more accurate AI/ML outcomes. If we can leverage an enterprise Data Lake and a community-based Data Lake as a continuous feed to the self-healing models, the accuracy of these models will increase over time.

Frameworks

It is important to maintain different language bindings of test automation frameworks in any organisation. The reason is that, in order to achieve a one-team mentality and move towards the same goal, both the product and the automation have to be written in the same language. If you follow the philosophy of ‘pick your automation framework’s language to be the same as your product’s language’, then everyone can contribute to both sides of the world. On the other hand, if your product is written in Java and your automation framework is written in C#, a wall will grow between the test and dev ‘mindsets’, and people are going to throw things over the wall to each other. There would be no one team, one goal. This is detrimental to the core idea of CI/CD.

Having synergy between the dev team’s and the QE team’s tool sets helps an organisation achieve a quality built-in approach, as the dev team is more likely to use the testing tools and frameworks.

A recent trend in test automation frameworks is the rise of non-Selenium-based frameworks. These frameworks really touch the core of developer productivity. While this market is still evolving, reactions are mixed, mainly because of missing features, ranging from cross device functionality to certain intricate features like opening a page in a new tab.

Because of this, teams may need to maintain two different frameworks: one for faster feedback and one for cross browser & device coverage.

Nevertheless, these frameworks are here to stay, for the benefit of all, and I see them continuously evolving based on feedback and need.

Selenium 4 is around the corner, with new features like intercepting requests, modifying responses, emulating network conditions, capturing performance metrics and an enhanced grid, all without having to use a proxy. This should result in further consolidation of the UI automation framework domain. Selenium 4 may make a few other frameworks irrelevant, like what happened to PhantomJS.

Accessibility

Accessibility is an important topic in automation, as being inclusive is good for humanity and also for business. Close your eyes and try to navigate through a website using an accessibility tool, and you will realise how difficult it is to use a website with broken accessibility. There are many open source frameworks for accessibility, but they are scattered across different language bindings. It would be useful if accessibility validation became part of the major automation frameworks themselves.

ProdPerfect uses production data to auto-generate test automation code for business-critical workflows. This is a different approach to test automation, and possibly a good fit for identifying the regression cases to run, as they would be the exact flows customers use on a site.

This is an interesting space which has matured and is at the same time still evolving. It has the potential to change dramatically, given recent trends in cost and services provided.

The differentiating patterns are,

Most providers are also touching on the performance domain, surfacing browser memory and CPU metrics, metrics like firstPaint, domContentLoaded and pageSize, and access to request headers and responses, so you could build up a HAR file for each test.

Cloud vs on-premise and public vs private cloud are another factor.

As you can see, some of the players in this field are also trying to add performance and visual automation to their solutions. I believe this is partly driven by competition between providers to stay at parity.

The ultimate differentiator is cost!

AWS Mac mini Instance Type

But the major technological disruption in this area is AWS providing the mac1.metal EC2 instance type, a Mac mini based instance that allows you to run Xcode and iOS simulators for native apps or mobile web. It is billed at $1.083/hr and has a minimum host allocation and billing duration of 24 hours, so just starting one Mac mini EC2 instance costs $25.992 (24 × $1.083), and $1.083/hr after that. This is in addition to AWS Device Farm, which provides real device support for both iOS and Android but is limited by the small number of test automation frameworks it supports.
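The pricing above can be worked through with a small calculation. This is only a sketch using the figures quoted in this post (the rate and the 24-hour minimum may differ by region or change over time), and `mac1_metal_cost` is a made-up helper name.

```python
from decimal import Decimal

HOURLY_RATE = Decimal("1.083")  # USD per hour, as quoted above
MINIMUM_HOURS = Decimal(24)     # minimum host allocation and billing duration


def mac1_metal_cost(hours_used: int) -> Decimal:
    """Cost of one host: you pay for at least 24 hours, then per hour used."""
    billed_hours = max(Decimal(hours_used), MINIMUM_HOURS)
    return HOURLY_RATE * billed_hours


print(mac1_metal_cost(1))   # 25.992, a one-hour test run still pays for 24 hours
print(mac1_metal_cost(30))  # 24 minimum hours plus 6 more at the hourly rate
```

The practical consequence is that short, occasional iOS runs are expensive per test, while keeping a host busy for the full 24 hours spreads the same $25.992 across many runs.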

Free Open Device Farms

Another disruption in the cross device and browser domain is the emerging concept of the open device lab, where anyone can make their own device available in the cloud for testing, for free. This was initiated as a community-based project.

IoT (Internet of Things)

The IoT device testing space is also emerging, with very few players.

New Players

Companies in the Application Performance Monitoring market are also becoming players in the cross browser/device field, with offerings ranging from code-less, UI automation based monitoring to involvement in the CI/CD pipeline.

Intriguing

Analytics platform companies like Glassbox, whose service includes recording every user action and using that to visually play back how users are using a website, have a good chance of becoming players in this domain as well. The main reason is that they have the relevant data (which is gold): every step of the users’ actions, all the elements they clicked and their visual equivalents. They just need to aggregate this and stitch it together, convert it to automation code (Code Generation), and provide a platform to run it across browsers and devices, or allow users to connect to external cross browser vendors.

This domain has evolved a lot, but the test domain hasn’t caught up with it yet. It is a niche market which requires its own persona, infrastructure setup and mindset. There are few or no real players in this domain.

Anything and everything is driven by ML models nowadays, but how would you verify that your ML-model-driven product is behaving the way it is expected to? For example, if you deploy an ML model to influence which product is shown at the top of your shopping page and the behaviour is not tested, it could end in disaster. It is not sufficient to test just the model, because other factors might alter the behaviour of your shopping page, so it is important to test what the users would see. Lots of things could go wrong here: bad data, wrong fine-tuning parameters, overfitting, etc. This is especially true if the updating of ML model data is fully automated as a feedback loop.

A generic framework to check whether the behaviour matches the expectation would be a good addition.
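As a rough illustration of what such a check might look like, the sketch below expresses expectations as named rules over what the user would actually see. Everything here is an assumption for the sake of example: the product fields, the rule names and the `violated_rules` helper are invented, and in practice `displayed` would be scraped from the rendered page rather than hard-coded.

```python
from typing import Callable, Dict, List

Product = Dict[str, object]
Rule = Callable[[List[Product]], bool]


def violated_rules(displayed: List[Product], rules: Dict[str, Rule]) -> List[str]:
    """Return the names of the expectations the displayed list does not meet."""
    return [name for name, rule in rules.items() if not rule(displayed)]


# Hypothetical business expectations, independent of how the model ranks items.
RULES: Dict[str, Rule] = {
    "top product is in stock": lambda items: bool(items) and bool(items[0]["in_stock"]),
    "no duplicate products": lambda items: len({p["name"] for p in items}) == len(items),
}

# What the shopping page showed after the model (and everything else) ran.
displayed = [
    {"name": "kettle", "in_stock": False},
    {"name": "toaster", "in_stock": True},
]
print(violated_rules(displayed, RULES))
```

Because the rules look only at the final output, the same check catches problems whether they come from bad data, a badly tuned model or some other part of the page.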

When you run automated tests and store the results, after a period of time you will have a long history of all your automation runs, failures and test cases, including how they failed and, especially, the error messages. This is a gold mine. If you could train an ML model on these error messages to classify them into different categories, and put it behind an API that accepts a string, what you have is a service that will automatically categorise your automation failures based on their error messages. If you can get it above 90% accuracy and integrate it with your automation framework, you don’t have to manually analyse your test failures any more.

If the model is 90%+ accurate, not only can you classify your failures into different categories, you could also call the Jira API and create a ticket whenever the classification says an error is a defect. Imagine the amount of time and $$ that could be saved here.

This requires a generic ML model that anyone could use, trained on their own error messages, and a system to connect to this model and use it.
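A toy version of such a classifier can be sketched with nothing but the standard library. This is a simple naive Bayes text classifier, chosen here only because it is short; the categories, the training messages and the `FailureClassifier` name are all invented for illustration, and a real model would need far more data to get anywhere near 90% accuracy.

```python
import math
import re
from collections import Counter, defaultdict


def tokenize(message: str) -> list:
    """Lowercase the message and keep only alphabetic words."""
    return re.findall(r"[a-z]+", message.lower())


class FailureClassifier:
    """Naive Bayes over error-message words, with add-one smoothing."""

    def __init__(self) -> None:
        self.word_counts = defaultdict(Counter)  # label -> word -> count
        self.label_counts = Counter()            # label -> number of examples
        self.vocabulary = set()

    def train(self, examples) -> None:
        for message, label in examples:
            self.label_counts[label] += 1
            for word in tokenize(message):
                self.word_counts[label][word] += 1
                self.vocabulary.add(word)

    def classify(self, message: str) -> str:
        total = sum(self.label_counts.values())
        words = [w for w in tokenize(message) if w in self.vocabulary]
        best_label, best_score = None, -math.inf
        for label, count in self.label_counts.items():
            label_total = sum(self.word_counts[label].values())
            score = math.log(count / total)
            for word in words:
                seen = self.word_counts[label][word] + 1
                score += math.log(seen / (label_total + len(self.vocabulary)))
            if score > best_score:
                best_label, best_score = label, score
        return best_label


# Made-up history of past failures and how a human categorised them.
HISTORY = [
    ("TimeoutException: page did not load within 30 seconds", "environment"),
    ("Connection refused while contacting selenium grid", "environment"),
    ("NoSuchElementException: unable to locate element #checkout", "locator"),
    ("StaleElementReferenceException: element is not attached", "locator"),
    ("AssertionError: expected total 20.00 but was 25.00", "defect"),
    ("AssertionError: expected status 200 but was 500", "defect"),
]

classifier = FailureClassifier()
classifier.train(HISTORY)
print(classifier.classify("AssertionError: expected price 10 but was 12"))
```

Wrapped behind an API that accepts a string, the `classify` call is the piece an automation framework would talk to, and a "defect" result is the point at which a ticket could be raised.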

This has players in the market, as you could see from the first section.

This is going to be our future; countries like the U.K. are already setting a goal to phase out non-electric cars by 2035. More than the electric drive, the self-driving feature is what is of interest here. How can you test whether a self-driving car can distinguish all the thousands of combinations of images around it as it cruises at 70mph or more? You don’t need the car itself to test this, just the cameras and the system that does the work, plus an infrastructure where the behaviour of the car itself can be simulated.

What I have learned is that Continuous Integration / Continuous Delivery is going to be the norm, for which you need Shift Left as opposed to testing in production. That means when a change is committed, it needs to be production ready.

With cloud-based solutions added to the mix, and lots of cost-effective alternatives evolving on a weekly basis, transitioning or switching from one platform to another should be straightforward.

We are at a place where we need everything in one place, easily integrable via APIs. Imagine that, as part of your CI/CD pipeline, you want to run some of your tests on Selenium Grid, some on an iOS simulator running on AWS mac1.metal, some on real devices from a cross browser & device provider, some on a visual regression platform, some on a performance and load testing platform and some on a chaos testing platform, then view the report of all the runs on another intelligent reporting platform, and manage all of this from one place. This is truly the missing piece: an AWS of Testing.

Look out for Part II.
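To give a feel for what "one place" could mean in code, here is a deliberately small sketch. No such unified API exists as far as this post is concerned: `AutomationHub`, `RunResult` and the provider names are hypothetical, and the providers are stub functions standing in for real integrations.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class RunResult:
    suite: str
    provider: str
    passed: bool


class AutomationHub:
    """Hypothetical single entry point that routes suites to providers."""

    def __init__(self) -> None:
        self._providers: Dict[str, Callable[[str], bool]] = {}

    def register(self, name: str, run_suite: Callable[[str], bool]) -> None:
        """Plug in a provider; swapping vendors means re-registering a name."""
        self._providers[name] = run_suite

    def run(self, plan: Dict[str, str]) -> List[RunResult]:
        """Run each suite on the provider the plan assigns to it."""
        results = []
        for suite, provider in plan.items():
            if provider not in self._providers:
                raise KeyError(f"no provider registered as {provider!r}")
            passed = self._providers[provider](suite)
            results.append(RunResult(suite, provider, passed))
        return results


hub = AutomationHub()
hub.register("selenium-grid", lambda suite: True)    # stub: always passes
hub.register("ios-simulator", lambda suite: True)    # stub: always passes
hub.register("visual-regression", lambda suite: False)  # stub: always fails

report = hub.run({
    "checkout-web": "selenium-grid",
    "checkout-ios": "ios-simulator",
    "homepage-pixels": "visual-regression",
})
for result in report:
    print(result)
```

The point of the design is that the pipeline only ever talks to the hub, so moving a suite to a cheaper or better provider is a one-line change to the plan.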

While the list is ever growing, we might have accidentally missed some. Please feel free to fork the gist or message me any corrections; we’ll be more than happy to incorporate your changes!

List of Tools

https://gist.github.com/digy4tech/18b19bb37b5d4d02c6b091eaf08e73cd